STaR-GATE: Teaching Language Models to Ask Clarifying Questions

Abstract

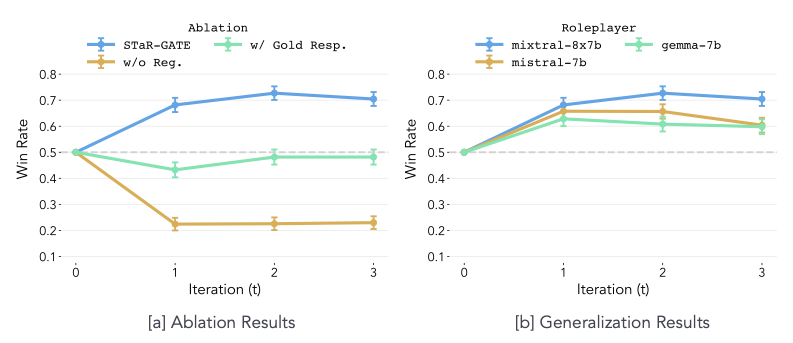

When prompting language models to complete a task, users often leave important aspects unsaid. While asking questions could resolve this ambiguity (GATE; Li et al., 2023), models often struggle to ask good questions. We explore a language model’s ability to self-improve (STaR; Zelikman et al., 2022) by rewarding the model for generating useful questions, a simple method we dub STaR-GATE. We generate a synthetic dataset of 25,500 unique persona-task prompts to simulate conversations between a pretrained language model (the Questioner) and a Roleplayer whose preferences are unknown to the Questioner. By asking questions, the Questioner elicits preferences from the Roleplayer. The Questioner is iteratively finetuned on questions that increase the probability of high-quality responses to the task, which are generated by an Oracle with access to the Roleplayer’s latent preferences. After two iterations of self-improvement, the Questioner asks better questions, allowing it to generate responses that are preferred over responses from the initial model on 72% of tasks. Our results indicate that teaching a language model to ask better questions leads to better personalized responses.
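The selection step described above, keeping only questions that make the Oracle's high-quality response more likely, can be illustrated with a short sketch. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes best-of-k filtering (one kept conversation per task), and `Conversation`, `select_training_conversations`, and `toy_score` are hypothetical names. In a real setup the score would be the Questioner's log-probability of the Oracle's response given the conversation, computed with a model forward pass; here a word-overlap toy scorer stands in for it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Conversation:
    task_id: str
    turns: List[str]  # alternating Questioner questions and Roleplayer answers


# Hypothetical scorer: stands in for log p(oracle_response | task, conversation)
# under the current Questioner.
ScoreFn = Callable[[Conversation, str], float]


def select_training_conversations(
    conversations: List[Conversation],
    oracle_responses: Dict[str, str],
    score: ScoreFn,
) -> List[Conversation]:
    """For each task, keep the sampled conversation whose questions make the
    Oracle's response most likely (assumed best-of-k filtering)."""
    best: Dict[str, Conversation] = {}
    best_score: Dict[str, float] = {}
    for conv in conversations:
        s = score(conv, oracle_responses[conv.task_id])
        if conv.task_id not in best or s > best_score[conv.task_id]:
            best[conv.task_id] = conv
            best_score[conv.task_id] = s
    return list(best.values())


def toy_score(conv: Conversation, response: str) -> float:
    # Toy stand-in for a log-probability: counts conversation words that
    # also appear in the Oracle's response.
    words = set(response.lower().split())
    return float(sum(w in words for t in conv.turns for w in t.lower().split()))


# Usage: two sampled conversations for task "t1", one for task "t2".
convs = [
    Conversation("t1", ["What cuisine do you like?", "Thai food."]),
    Conversation("t1", ["How are you?", "Fine."]),
    Conversation("t2", ["Any budget?", "Under $50."]),
]
oracle = {"t1": "Try a thai curry recipe.", "t2": "Here is a plan under $50."}
selected = select_training_conversations(convs, oracle, toy_score)
```

In this sketch the kept conversations would then serve as finetuning targets for the next Questioner, and the sample-score-select cycle would repeat for each self-improvement iteration.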

BibTeX

@misc{andukuri2024stargate,
  title={STaR-GATE: Teaching Language Models to Ask Clarifying Questions},
  author={Chinmaya Andukuri and Jan-Philipp Fränken and Tobias Gerstenberg and Noah D. Goodman},
  year={2024},
  eprint={2403.19154},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}